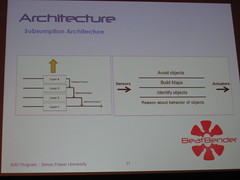

Aaron Levisohn presented his system BeatBender and showed how it could generate rhythms. The application for trying out different rule sets for rhythm generation looked very tempting to play with. In the Q&A I asked whether he thought it would be feasible to use it in another system. It would be interesting to feed BeatBender real-time data from WoM and the MM and see which rule sets could help represent states of mind. (It would also be interesting to see how the principle of subsumption architecture meets the spreading activation network, as a mental picture, I mean. Different node types could be connected to different hierarchical levels of the subsumption architecture, maybe according to decay rate.)

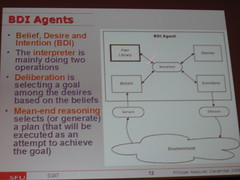

The second highlight for me was from Philippe Pasquier, who, like Aaron Levisohn, is from Simon Fraser University. He presented “Shadow Agent”. The user stands in a room and places her feet on the feet of the shadow. The user's movement is assessed. The shadow follows the user, as shadows do, but then starts to act independently, using a BDI-style decision about what to do. A plan is chosen from a database.

Semi Autonomous Avatar in extremis!

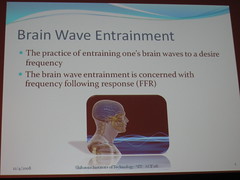

Other presentations I enjoyed that day were Peggy Weil’s and Nonny de la Peña’s “Avatar Mediated Cinema”, Bill Kapralos’s “Dimensionality Reduced HRTFs: A Comparative Study” and Sittapong Settapat’s and Michiko Ohkura’s “An Alpha-Activity-Based Binaural Beat Sound Entrainment System using Arousal State Model”. I want to play with binaural beat sounds too!